Alexander Güntert posted on LinkedIn about a new open-source project built by his colleague Hayal Oezkan: an MCP server for Zurich's open data. The post drew quite a few reactions, and I liked the idea very much. But it still required a local installation, which is not something non-developers can easily do. So I had it packaged and deployed on our servers, where anyone can use it as the "OGD City of Zurich" remote MCP server.

The City of Zurich publishes over 900 datasets as open data, spread across six different APIs. There's CKAN for the main data catalog, a WFS Geoportal for geodata, the Paris API for parliamentary information from the Gemeinderat, a tourism API, SPARQL linked data, and ParkenDD for real-time parking data. All public, all freely available. But until now, getting an AI assistant to actually use these APIs meant writing a custom integration for each one.
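
To give a sense of what one such custom integration involves, here is a minimal sketch for the CKAN catalog alone. It uses CKAN's standard `package_search` action; the base URL and the helper names `build_search_url` and `dataset_titles` are my assumptions for illustration, not code from the project.

```python
from typing import Any, Dict, List
from urllib.parse import urlencode

# Assumption: base URL of the city's CKAN portal; adjust if it differs.
CKAN_BASE_URL = "https://data.stadt-zuerich.ch"


def build_search_url(query: str, rows: int = 5) -> str:
    """Build a URL for CKAN's standard `package_search` action."""
    params = urlencode({"q": query, "rows": rows})
    return f"{CKAN_BASE_URL}/api/3/action/package_search?{params}"


def dataset_titles(payload: Dict[str, Any]) -> List[str]:
    """Extract dataset titles from a decoded `package_search` response."""
    if not payload.get("success"):
        raise RuntimeError("CKAN reported an unsuccessful request")
    # Fall back to the dataset's machine name when no title is set.
    return [d.get("title") or d.get("name", "") for d in payload["result"]["results"]]


if __name__ == "__main__":
    # Only builds the URL; fetching it (e.g. with urllib.request) is left out
    # so the sketch runs without network access.
    print(build_search_url("parkhaus"))
```

Multiply this by six APIs, each with its own query style and response format, and the appeal of a single shared interface becomes clear.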

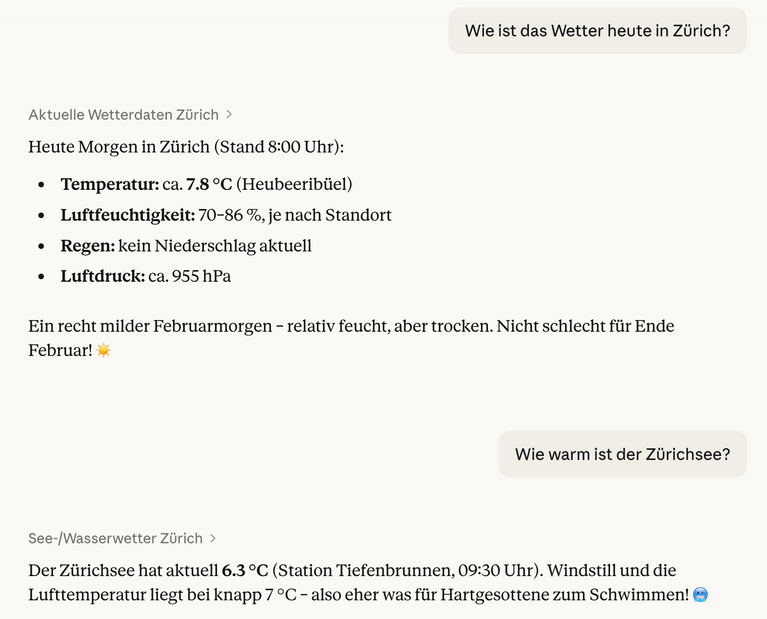

The MCP server wraps all six APIs into 20 tools that any MCP-compatible AI assistant can call directly. Ask "How warm is it in Zurich right now?" and it queries the live weather stations. Ask about parking availability, and it pulls real-time data from 36 parking garages. It also covers parliamentary motions, tourism recommendations, SQL queries on the data store, and GeoJSON features for school locations, playgrounds, or climate data. All through a single, standardized Model Context Protocol interface.

Hayal Oezkan built it in Python using FastMCP. One file for the server with all 20 tool handlers. The repo is on GitHub.

Deploying it on our side took very little effort. The server supports stdio transport for local use (like in Claude Desktop or Claude Code), as well as SSE and streamable HTTP for remote deployment. I packaged it with Docker, deployed it to our cluster, and now it's available as a remote MCP server that anyone can add to their AI tools without installing anything locally.

The natural next step was integrating this with ZüriCityGPT. It happened, just not quite in the direction I originally had in mind.

ZüriCityGPT already had its own MCP server at zuericitygpt.ch/mcp, exposing tools for searching the city's website content and "Stadtratsbeschlüsse" (city council resolutions), and for looking up waste collection schedules. Instead of wiring the open data tools into ZüriCityGPT, I went the other way: the open data MCP server now proxies tools from the ZüriCityGPT MCP server. A lightweight proxy client connects to the remote server via streamable-http and forwards calls. The whole thing is about 40 lines of Python.
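
The proxy idea can be sketched roughly like this. This is not the actual 40 lines: the real proxy talks to the upstream server over streamable-http, whereas here a stub function (`fake_remote_call`) stands in for that network call, and `ToolRegistry` and `local_example_tool` are hypothetical names chosen for illustration.

```python
from typing import Any, Callable, Dict, List

ToolHandler = Callable[[Dict[str, Any]], Any]
Forwarder = Callable[[str, Dict[str, Any]], Any]


class ToolRegistry:
    """Maps tool names to handlers; proxied tools simply forward upstream."""

    def __init__(self) -> None:
        self._tools: Dict[str, ToolHandler] = {}

    def register(self, name: str, handler: ToolHandler) -> None:
        if name in self._tools:
            raise ValueError(f"duplicate tool name: {name}")
        self._tools[name] = handler

    def register_proxy(self, name: str, forward: Forwarder) -> None:
        # The local handler does no work of its own: it passes the tool
        # name and arguments through to whatever `forward` talks to.
        self.register(name, lambda arguments: forward(name, arguments))

    def call(self, name: str, arguments: Dict[str, Any]) -> Any:
        return self._tools[name](arguments)

    def names(self) -> List[str]:
        return sorted(self._tools)


def fake_remote_call(name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    # Stand-in for an MCP client call to the upstream server.
    return {"forwarded": True, "tool": name, "arguments": arguments}


registry = ToolRegistry()
# Hypothetical local tool, standing in for the open data handlers.
registry.register("local_example_tool", lambda arguments: {"echo": arguments})
for proxied in ("zurich_search", "zurich_waste_collection"):
    registry.register_proxy(proxied, fake_remote_call)
```

From the caller's point of view, local and proxied tools look identical: both are just names in the same registry.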

So now, when you connect to the Zurich Open Data MCP server, you get 22 tools in one place: the 20 original open data tools across six APIs, plus zurich_search for querying the city's knowledge base and zurich_waste_collection for waste pickup schedules (based on the OpenERZ API). One MCP endpoint, many services behind it.
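
What "one endpoint" means in practice: MCP is built on JSON-RPC 2.0, so every tool, whether local or proxied, is invoked with the same `tools/call` message shape. The sketch below only constructs such a message. The argument name `query` is my assumption; the real tool's input schema may differ.

```python
import itertools
import json
from typing import Any, Dict

# Simple counter for JSON-RPC request ids.
_request_ids = itertools.count(1)


def tools_call_request(tool_name: str, arguments: Dict[str, Any]) -> Dict[str, Any]:
    """Build the JSON-RPC 2.0 message an MCP client sends to call a tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


if __name__ == "__main__":
    # Assumption: `query` is a plausible parameter name, not a confirmed one.
    request = tools_call_request("zurich_search", {"query": "Sperrgut entsorgen"})
    print(json.dumps(request, indent=2, ensure_ascii=False))
```

In everyday use the MCP client library builds these messages for you; the point is only that the caller never needs to know which of the backing services answers.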

A city employee builds something useful in the open, publishes the code, and within a day it's deployed and available to a wider audience. Open data and open source working together, exactly as intended.