Our client Statistik Stadt Zürich recently launched their Linked Open Statistical Data (LOSD) SPARQL endpoint. They publish the contents of their annual statistical yearbook as RDF using the DataCube vocabulary.

Since we build their Open Data portal data.stadt-zuerich.ch, which is based on CKAN, they asked us how we could publish their LOSD on the catalogue.

Why add Linked Data to a data catalogue?

A SPARQL endpoint allows you to ask a question that would normally require combining data from several datasets. But you already need to know which data exist and what properties they have, and browsing an endpoint to find out what is available can be difficult.

This is where a data catalogue like CKAN can shine. It is neatly organized, provides an easy-to-use search and can be the starting point for a data dive. Once you have found an interesting dataset, you'll be referred to the SPARQL endpoint with an example query so you can start to adapt it to your needs.

You can compare it to Wikipedia and Wikidata:

- Wikipedia provides you with excellent articles about various topics (e.g. about the Eiffel Tower)

- Wikidata provides a query service to ask specific questions (e.g. what artwork depicts the Eiffel Tower)

As you can see, both sides have a right to exist and actually complement each other.

How could that work for RDF DataCube?

DataCube as dataset source

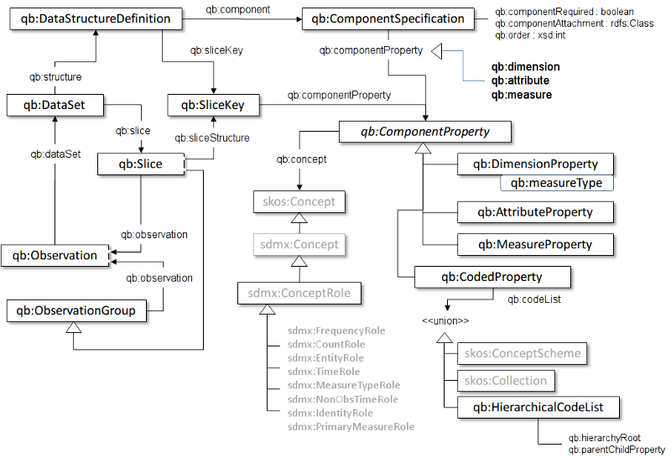

To get a rough understanding of the DataCube, this schema offers a helpful outline of the vocabulary:

Let's use the qb:DataSet as a CKAN dataset and try to find metadata for it.

First of all, we tried to extract datasets (as defined by the DataCube) in SPARQL:

PREFIX qb: <http://purl.org/linked-data/cube#>

PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>

SELECT ?dataset ?label WHERE {

# Zurich subgraph

GRAPH <https://linked.opendata.swiss/graph/zh/statistics> {

?dataset a qb:DataSet ;

rdfs:label ?label .

?obs <http://purl.org/linked-data/cube#dataSet> ?dataset .

}}

GROUP BY ?dataset ?label

LIMIT 1000

This query extracts every qb:DataSet that has at least one observation. A dataset is a container for observations, and each observation represents one measured value. As a result, we get all datasets that are available in the specified subgraph (https://linked.opendata.swiss/graph/zh/statistics) of this SPARQL endpoint.

So this gives us the "entries" in our catalogue. Now let's find some more metadata for those entries.
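To work with such a result outside a query editor, for example in a notebook, the response can be flattened into plain rows. The following is a minimal sketch, assuming the endpoint returns the standard SPARQL 1.1 JSON results format. The function name `bindings_to_rows`, the sample label and the `example.org` URI are hypothetical and only serve as illustration.

```python
from typing import Any, Dict, List, Optional


def bindings_to_rows(result: Dict[str, Any]) -> List[Dict[str, Optional[str]]]:
    """Flatten a SPARQL JSON result into a list of {variable: value} rows."""
    variables = result["head"]["vars"]
    rows = []
    for binding in result["results"]["bindings"]:
        # Unbound variables (e.g. from OPTIONAL patterns) are simply
        # missing from the binding, so they are filled with None here.
        rows.append({
            var: binding[var]["value"] if var in binding else None
            for var in variables
        })
    return rows


# Hand-written sample in the shape of a SPARQL JSON response.
# The label and the second dataset URI are made up for illustration.
sample = {
    "head": {"vars": ["dataset", "label"]},
    "results": {"bindings": [
        {
            "dataset": {
                "type": "uri",
                "value": "https://ld.stadt-zuerich.ch/statistics/dataset/AST-RAUM-ZEIT-BTA",
            },
            "label": {"type": "literal", "value": "Example label"},
        },
        {"dataset": {"type": "uri", "value": "https://example.org/dataset/without-label"}},
    ]},
}

rows = bindings_to_rows(sample)
```

Each row is a plain dictionary, which makes it straightforward to load the result into a table or to group it by dataset later on.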

Extract metadata from LOSD

Next, we extracted as much metadata as possible in order to match the DataCube metadata against the CKAN metadata used on data.stadt-zuerich.ch:

PREFIX qb: <http://purl.org/linked-data/cube#>

PREFIX skos: <http://www.w3.org/2004/02/skos/core#>

PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>

SELECT ?dataset ?title ?categoryLabel ?quelleLabel ?zeit ?updateDate ?glossarLabel ?btaLabel ?raumLabel

WHERE { GRAPH <https://linked.opendata.swiss/graph/zh/statistics> {

?dataset a qb:DataSet ;

rdfs:label ?title .

# group

OPTIONAL {

?category a <https://ld.stadt-zuerich.ch/schema/Category> ;

rdfs:label ?categoryLabel ;

skos:narrower* ?dataset .

}

# source, time, update date

?obs <http://purl.org/linked-data/cube#dataSet> ?dataset .

OPTIONAL {

?obs <https://ld.stadt-zuerich.ch/statistics/attribute/QUELLE> ?quelle .

?quelle rdfs:label ?quelleLabel .

}

OPTIONAL {

?obs <https://ld.stadt-zuerich.ch/statistics/property/RAUM> ?raum .

?raum rdfs:label ?raumLabel .

}

OPTIONAL { ?obs <https://ld.stadt-zuerich.ch/statistics/property/ZEIT> ?zeit } .

OPTIONAL { ?obs <https://ld.stadt-zuerich.ch/statistics/attribute/DATENSTAND> ?updateDate } .

# use GLOSSAR and BTA (and others) for tags

OPTIONAL {

?obs <https://ld.stadt-zuerich.ch/statistics/attribute/GLOSSAR> ?glossar .

?glossar rdfs:label ?glossarLabel .

}

OPTIONAL {

?obs <https://ld.stadt-zuerich.ch/statistics/property/BTA> ?bta .

?bta rdfs:label ?btaLabel .

}

FILTER (?dataset = <https://ld.stadt-zuerich.ch/statistics/dataset/AST-RAUM-ZEIT-BTA>)

}}

GROUP BY ?dataset ?title ?categoryLabel ?quelleLabel ?zeit ?updateDate ?glossarLabel ?btaLabel ?raumLabel

LIMIT 1000

What’s going on here?

- The “FILTER” clause narrows down the hits; in this case it only returns results for one specific dataset

- The “OPTIONAL” clause declares triple patterns that don’t have to match

- This matters in our case because, depending on the dataset, an observation might have different properties (some have BTA, others have RAUM or GLOSSAR)

- Note that the category is modelled as a superset of a dataset using SKOS

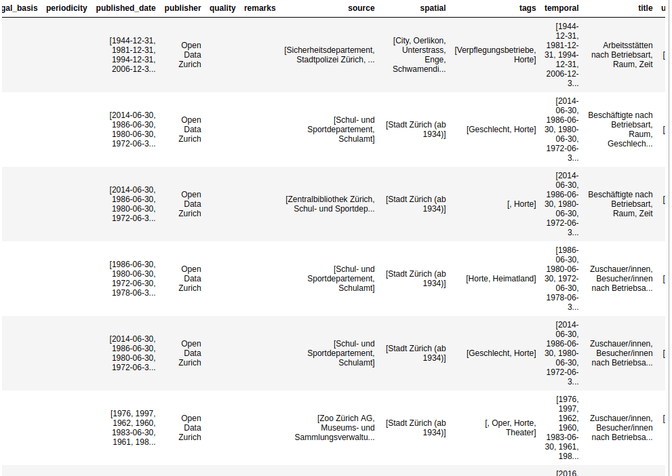

Now that we have extracted a bunch of metadata, we can match them to the metadata needed by CKAN. The result looks something like that:

Generate a SPARQL-Query to get the actual data

How can we generate a meaningful SPARQL query for each dataset? If we go back to the DataCube vocabulary, we can see that a dataset has a DataStructureDefinition. This helps us to uncover the structure of a dataset on a generic level. That means that, given a dataset, we can extract the properties it uses:

PREFIX rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#>

PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>

PREFIX qb: <http://purl.org/linked-data/cube#>

SELECT ?dataset ?datasetLabel ?component ?componentLabel

FROM <https://linked.opendata.swiss/graph/zh/statistics>

WHERE {

?spec a qb:DataStructureDefinition ;

qb:component/(qb:dimension|qb:attribute|qb:measure) ?component .

?component rdfs:label ?componentLabel .

?dataset a qb:DataSet ;

rdfs:label ?datasetLabel ;

qb:structure ?spec .

FILTER (?dataset = <https://ld.stadt-zuerich.ch/statistics/dataset/AST-RAUM-ZEIT-BTA>)

} ORDER BY ?dataset

This tells us that the dataset uses the following properties:

- https://ld.stadt-zuerich.ch/statistics/property/BTA (Betriebsart)

- https://ld.stadt-zuerich.ch/statistics/measure/AST (Arbeitsstätten)

- https://ld.stadt-zuerich.ch/statistics/property/ZEIT (Zeit)

- https://ld.stadt-zuerich.ch/statistics/property/RAUM (Raum)

- https://ld.stadt-zuerich.ch/statistics/attribute/ERWARTETE_AKTUALISIERUNG (Erwartete Aktualisierung)

- https://ld.stadt-zuerich.ch/statistics/attribute/KORREKTUR (Korrektur)

- https://ld.stadt-zuerich.ch/statistics/attribute/DATENSTAND (Datenstand)

- https://ld.stadt-zuerich.ch/statistics/attribute/QUELLE (Quelle)

- https://ld.stadt-zuerich.ch/statistics/attribute/FUSSNOTE (Fussnote)

- https://ld.stadt-zuerich.ch/statistics/attribute/GLOSSAR (Glossar)

And in combination with the dataset, we can easily generate the following query automatically:

PREFIX rdf: <http://www.w3.org/1999/02/22-rdf-syntax-ns#>

PREFIX rdfs: <http://www.w3.org/2000/01/rdf-schema#>

PREFIX qb: <http://purl.org/linked-data/cube#>

SELECT * WHERE { GRAPH <https://linked.opendata.swiss/graph/zh/statistics> {

?obs a qb:Observation ;

qb:dataSet <https://ld.stadt-zuerich.ch/statistics/dataset/AST-RAUM-ZEIT-BTA> ;

<https://ld.stadt-zuerich.ch/statistics/attribute/ERWARTETE_AKTUALISIERUNG> ?erwartete_aktualisierung ;

<https://ld.stadt-zuerich.ch/statistics/attribute/KORREKTUR> ?korrektur ;

<https://ld.stadt-zuerich.ch/statistics/attribute/DATENSTAND> ?datenstand ;

<https://ld.stadt-zuerich.ch/statistics/measure/AST> ?arbeitsstatten ;

<https://ld.stadt-zuerich.ch/statistics/property/BTA> ?betriebsart ;

<https://ld.stadt-zuerich.ch/statistics/property/RAUM> ?raum ;

<https://ld.stadt-zuerich.ch/statistics/attribute/QUELLE> ?quelle ;

<https://ld.stadt-zuerich.ch/statistics/property/ZEIT> ?zeit ;

<https://ld.stadt-zuerich.ch/statistics/attribute/FUSSNOTE> ?fussnote ;

<https://ld.stadt-zuerich.ch/statistics/attribute/GLOSSAR> ?glossar .

}}

This SPARQL query can then be refined by a user or simply used to extract the data as CSV.
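The generation step itself is mostly string assembly. Here is a minimal sketch in Python, assuming the components of a dataset have already been fetched as (URI, label) pairs. The helper names `to_variable` and `build_observation_query` are hypothetical and not taken from the prototype; deriving variable names from the labels is likewise just one possible choice.

```python
import re
import unicodedata
from typing import List, Tuple


def to_variable(label: str) -> str:
    """Turn a component label into a variable name (ASCII, lowercase, underscores)."""
    # NFKD splits "ä" into "a" plus a combining mark, which the ASCII encoding drops.
    ascii_label = unicodedata.normalize("NFKD", label).encode("ascii", "ignore").decode("ascii")
    return re.sub(r"\W+", "_", ascii_label).strip("_").lower()


def build_observation_query(graph: str, dataset: str, components: List[Tuple[str, str]]) -> str:
    """Build a SELECT query that binds every component of a dataset to a variable."""
    patterns = [f"    qb:dataSet <{dataset}>"]
    patterns += [f"    <{uri}> ?{to_variable(label)}" for uri, label in components]
    body = " ;\n".join(patterns) + " ."
    return (
        "PREFIX qb: <http://purl.org/linked-data/cube#>\n"
        f"SELECT * WHERE {{ GRAPH <{graph}> {{\n"
        "  ?obs a qb:Observation ;\n"
        f"{body}\n"
        "}}"  # plain string: closes the GRAPH and WHERE blocks
    )


# A subset of the components listed above, as (URI, label) pairs.
components = [
    ("https://ld.stadt-zuerich.ch/statistics/measure/AST", "Arbeitsstätten"),
    ("https://ld.stadt-zuerich.ch/statistics/property/BTA", "Betriebsart"),
    ("https://ld.stadt-zuerich.ch/statistics/property/ZEIT", "Zeit"),
]
query = build_observation_query(
    "https://linked.opendata.swiss/graph/zh/statistics",
    "https://ld.stadt-zuerich.ch/statistics/dataset/AST-RAUM-ZEIT-BTA",
    components,
)
print(query)
```

Because every component becomes a mandatory triple pattern, observations that lack one of the properties will not show up in the result. Wrapping the attribute patterns in OPTIONAL would be a possible refinement.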

Conclusion

- The metadata can be mapped; the DataCube provides all the necessary concepts

- Since a dataset consists of many observations and in LOSD the metadata is mostly attached to the observation, we end up with several values per field for each dataset. So the values must be aggregated somehow (e.g. concatenate all values, always pick the first value, or extend the catalogue to accept multiple values)

- Some fields are empty, so they either need to be updated in LOSD or another source for the data must be found

- It’s easy to generate a meaningful SPARQL query for a dataset (to help users explore the LOSD or to extract the data as CSV)
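The aggregation options mentioned above can be sketched as follows. This is only an illustration under assumptions: the rows are expected as dictionaries keyed by the variable names of the metadata query, the function names are hypothetical, and the sample values are made up.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Optional


def collect_values(
    rows: Iterable[Dict[str, Optional[str]]], key: str = "dataset"
) -> Dict[str, Dict[str, List[str]]]:
    """Group observation-level rows by dataset, keeping distinct values per field in order."""
    grouped: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: defaultdict(list))
    for row in rows:
        for field, value in row.items():
            if field == key or value is None:
                continue  # skip the grouping key and unbound OPTIONAL values
            if value not in grouped[row[key]][field]:
                grouped[row[key]][field].append(value)
    return {dataset: dict(fields) for dataset, fields in grouped.items()}


def concatenate(values: List[str], separator: str = ", ") -> str:
    """Option 1: join all distinct values into a single string."""
    return separator.join(values)


def first(values: List[str]) -> Optional[str]:
    """Option 2: always pick the first value (None if there is none)."""
    return values[0] if values else None


# Made-up rows; "d1" stands in for a dataset URI.
rows = [
    {"dataset": "d1", "quelleLabel": "Source A", "btaLabel": "Type X"},
    {"dataset": "d1", "quelleLabel": "Source B", "btaLabel": "Type X"},
    {"dataset": "d1", "quelleLabel": "Source A", "btaLabel": None},
]
values = collect_values(rows)["d1"]
print(concatenate(values["quelleLabel"]))  # Source A, Source B
print(first(values["btaLabel"]))           # Type X
```

The third option, extending the catalogue to accept multiple values, would simply pass the collected lists on unchanged.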

Please find the whole source code of our prototype as a Jupyter notebook on GitHub.